The nighttime crash, in which the pedestrian was struck at 39 mph and killed, “was the last link of a long chain of actions and decisions made by an organization that unfortunately did not make safety the top priority,” the NTSB statement reads. The statement adds that the crash could likely have been avoided if the driver hadn’t been “visually distracted” in the moments leading up to the collision.

The tragic incident has fueled doubts about the safety of fully autonomous vehicles: in a YouGov poll conducted earlier this year, 60 percent of respondents said they would feel somewhat or very unsafe as a pedestrian in a city with self-driving cars.

The NTSB criticized the lack of federal safety standards and assessment protocols for automated driving systems. It recommended that any entity wishing to test such a system on public roads be required to submit a safety self-assessment plan to the National Highway Traffic Safety Administration, covering measures such as monitoring test drivers to ensure they remain attentive.

While self-driving cars are not yet allowed to operate outside of test programs anywhere in the world, many cars are already equipped with hardware that could, in theory, allow them to drive autonomously.

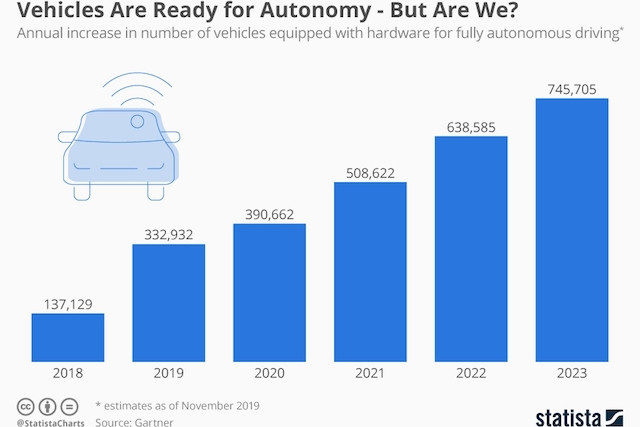

According to a recent Gartner forecast, roughly 330,000 “autonomous-ready” vehicles will hit the streets worldwide in 2019, and that number is expected to climb to nearly 750,000 by 2023. Once autonomous driving software is ready for the real world and regulatory issues have been resolved, these cars could be enabled to operate at higher levels of autonomy by way of a simple software update.

For autonomous vehicles to gain the trust of consumers, however, it won’t be enough for them to be as good as, or only slightly better than, human drivers, says Michael Ramsey, senior director analyst at Gartner: “From a psychological perspective, these vehicles will need to have substantially fewer accidents in order to be trusted.”